Emotion Recognition CNN

This project implements a Convolutional Neural Network (CNN) trained on the FER2013 dataset to classify face images into seven emotion categories. Training combines data augmentation with a deep VGG-style architecture built from stacked convolutional blocks, batch normalization, and dropout layers to improve generalization. Beyond classification, the project includes a complete inference pipeline that uses OpenCV and a Haar Cascade classifier to detect faces and overlay the predicted emotion, frame by frame, on video files.
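As a rough illustration of the architecture this README describes (four blocks, two Conv2D layers per block, 32 to 256 filters, batch normalization, dropout), here is a minimal Keras sketch. The 48x48 grayscale input matches FER2013 images; the 3x3 kernels, dropout rates, dense head, and optimizer are assumptions chosen for the example, not the project's actual settings, and `block_filters` and `build_model` are hypothetical names.

```python
def block_filters(first=32, last=256):
    """Filter counts per block, doubling from `first` up to `last`."""
    counts = []
    filters = first
    while filters <= last:
        counts.append(filters)
        filters *= 2
    return counts


def build_model(input_shape=(48, 48, 1), num_classes=7, dropout=0.25):
    """VGG-style Sequential CNN: 4 blocks x 2 Conv2D layers, then a dense head."""
    # Imported lazily so block_filters() can be used without TensorFlow installed.
    from tensorflow.keras import layers, models

    model = models.Sequential()
    model.add(layers.Input(shape=input_shape))
    for filters in block_filters():
        for _ in range(2):
            # Assumed 3x3 kernels with "same" padding, as in VGG-style networks.
            model.add(layers.Conv2D(filters, (3, 3), padding="same", activation="relu"))
            model.add(layers.BatchNormalization())
        model.add(layers.MaxPooling2D((2, 2)))  # halves height and width
        model.add(layers.Dropout(dropout))      # assumed rate
    model.add(layers.Flatten())
    model.add(layers.Dense(256, activation="relu"))  # assumed head size
    model.add(layers.Dropout(0.5))
    model.add(layers.Dense(num_classes, activation="softmax"))
    model.compile(optimizer="adam",
                  loss="categorical_crossentropy",
                  metrics=["accuracy"])
    return model
```

With these assumptions, four pooling steps reduce a 48x48 input to a 3x3 feature map before the dense head. Calling `build_model().summary()` prints the layer-by-layer shapes.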

Key Features

- Deep CNN Architecture: A 4-block Sequential model with two Conv2D layers per block, with filter counts growing from 32 to 256 across blocks to capture increasingly complex facial features.

- Data Augmentation Pipeline: Implemented real-time image transformation (rotation, zoom, horizontal flip) using Keras ImageDataGenerator to mitigate overfitting on the FER2013 dataset.

- Face Detection Integration: Uses OpenCV’s Haar Cascade frontal-face classifier to locate face Regions of Interest (ROI) in each image before they are fed into the neural network.

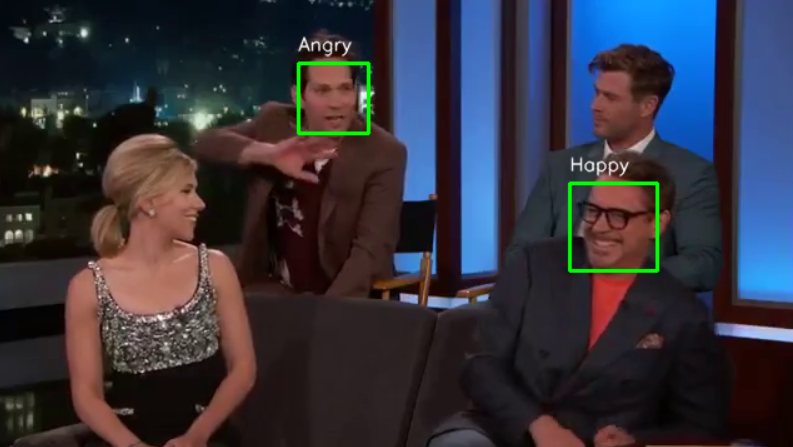

- Real-Time Video Inference: Developed a video processing script that performs frame-by-frame facial detection, emotion prediction, and visual annotation, saving the output as a processed .avi file.

- Model Performance Analytics: Leveraged Seaborn heatmaps for confusion matrix visualization and Scikit-learn for detailed F1-score and precision/recall classification reporting.

- Production-Ready Serialization: Saves the model architecture as JSON and the trained weights as an HDF5 file, so the model can be reloaded and deployed in other environments.
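The face detection and per-frame annotation steps listed above can be sketched roughly as follows. This is an illustration under stated assumptions rather than the project's actual script: the helper names are hypothetical, the label order follows the original FER2013 index order (a model trained from class folders may use a different order), and the 1/255 pixel scaling must match whatever was used during training.

```python
# Assumed label order: FER2013 indices 0-6. Check this against the training setup.
EMOTIONS = ["Angry", "Disgust", "Fear", "Happy", "Sad", "Surprise", "Neutral"]


def top_emotion(probabilities):
    """Return (label, score) for the highest-probability class."""
    probs = [float(p) for p in probabilities]
    idx = max(range(len(probs)), key=probs.__getitem__)
    return EMOTIONS[idx], probs[idx]


def prepare_roi(gray_frame, box, size=48):
    """Crop a detected face, resize it to size x size, and scale pixels to [0, 1]."""
    import cv2  # imported lazily so top_emotion() works without OpenCV installed

    x, y, w, h = box
    roi = cv2.resize(gray_frame[y:y + h, x:x + w], (size, size))
    roi = roi.astype("float32") / 255.0  # assumed to match training preprocessing
    return roi.reshape(1, size, size, 1)


def annotate_frame(frame, model, detector):
    """Detect faces in a BGR frame and draw a box and emotion label on each."""
    import cv2

    gray = cv2.cvtColor(frame, cv2.COLOR_BGR2GRAY)
    # scaleFactor and minNeighbors are illustrative values, not tuned settings.
    faces = detector.detectMultiScale(gray, scaleFactor=1.3, minNeighbors=5)
    for (x, y, w, h) in faces:
        probs = model.predict(prepare_roi(gray, (x, y, w, h)), verbose=0)[0]
        label, score = top_emotion(probs)
        cv2.rectangle(frame, (x, y), (x + w, y + h), (0, 255, 0), 2)
        cv2.putText(frame, f"{label} {score:.2f}", (x, y - 10),
                    cv2.FONT_HERSHEY_SIMPLEX, 0.8, (0, 255, 0), 2)
    return frame
```

Here `detector` would typically be a `cv2.CascadeClassifier` loaded from `cv2.data.haarcascades + "haarcascade_frontalface_default.xml"`, and `model` a Keras model rebuilt from the saved JSON and HDF5 files. A video script would call `annotate_frame` on each frame read from `cv2.VideoCapture` and write the result with `cv2.VideoWriter`.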

Tech Stack

- TensorFlow / Keras

- OpenCV (Computer Vision)

- Python

- NumPy

- Seaborn & Matplotlib (Data Visualization)

- Scikit-learn (Evaluation Metrics)

Screenshots