Medical QA Fine-Tuning

A GPT-2 model (the distilgpt2 checkpoint) fine-tuned on medical question-answer datasets to generate domain-specific responses. The project includes the full training pipeline, evaluation metrics, and an analysis of how fine-tuning changed the model, using embedding similarity and layer-wise comparison against the base model.

Key Features

- Custom dataset preprocessing and cleaning with pandas and Hugging Face Datasets

- Fine-tuning distilgpt2 (the distilled GPT-2 checkpoint) on medical Q&A data

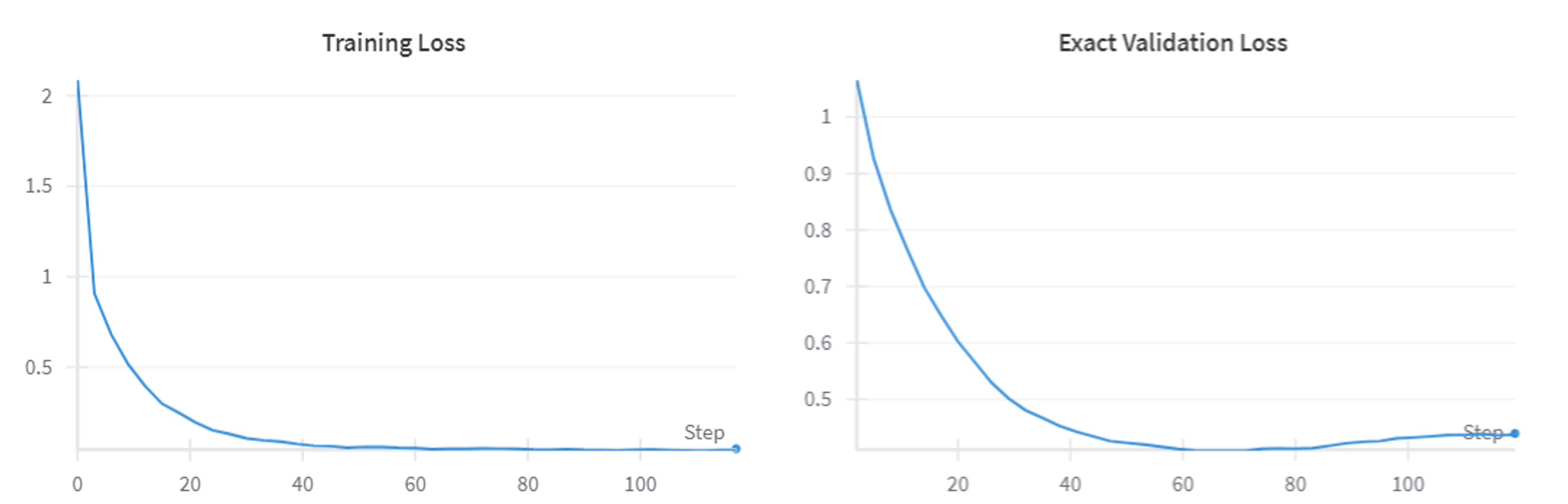

- Training pipeline with PyTorch, DataLoader, and batching

- Experiment tracking with Weights & Biases (wandb)

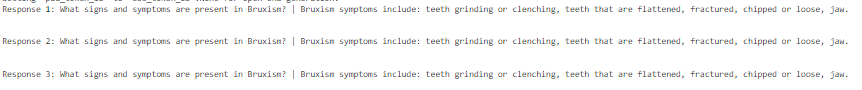

- Text generation with sampling (top-k, top-p, temperature)

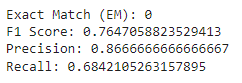

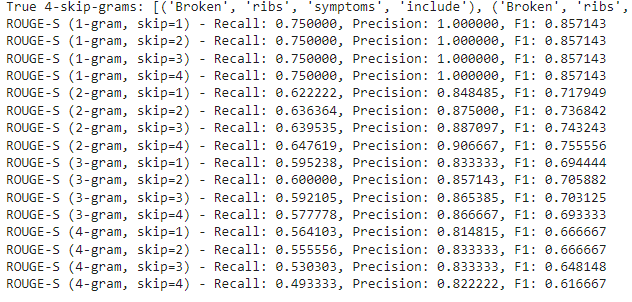

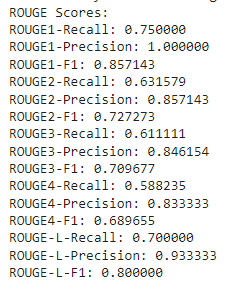

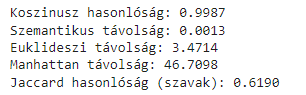
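The sampling controls listed above (temperature, top-k, top-p) can be illustrated with a small standalone sketch. This is not the project's generation code: `sample_next_token` and its parameters are hypothetical names, the function works on a plain list of logits, and library implementations may order or renormalize the filters differently.

```python
import math
import random


def sample_next_token(logits, temperature=1.0, top_k=0, top_p=1.0, rng=None):
    """Sample one token index from raw logits (illustrative helper).

    Applies temperature scaling, then top-k filtering, then top-p (nucleus)
    filtering, and samples from what is left.
    """
    rng = rng or random.Random()

    # Temperature: values below 1 sharpen the distribution, above 1 flatten it.
    scaled = [x / temperature for x in logits]

    # Numerically stable softmax.
    peak = max(scaled)
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    probs = [e / total for e in exps]

    # Rank token indices from most to least probable.
    ranked = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)

    # Top-k: keep only the k most probable tokens (0 disables the filter).
    if top_k > 0:
        ranked = ranked[:top_k]

    # Top-p: keep the smallest prefix whose cumulative probability reaches top_p.
    # Assumption: mass is measured on the full (unfiltered) distribution.
    kept, cumulative = [], 0.0
    for i in ranked:
        kept.append(i)
        cumulative += probs[i]
        if cumulative >= top_p:
            break

    # Sample among the surviving tokens, weighted by their probabilities.
    weights = [probs[i] for i in kept]
    return rng.choices(kept, weights=weights, k=1)[0]
```

With `top_k=1` or a very small `top_p` the function always returns the most probable token, which is a quick way to check that the filters behave as intended.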

- Evaluation using F1, Precision, Recall, Exact Match, BLEU, and ROUGE

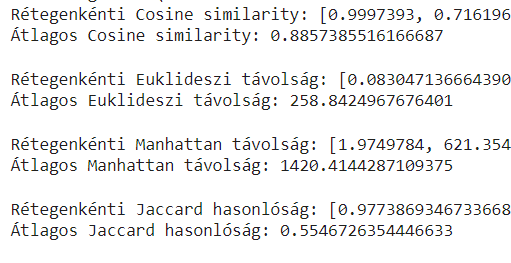
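For the overlap-based metrics above, a minimal sketch of token-level precision, recall, F1, and exact match is shown here. It assumes a SQuAD-style normalization (lowercasing, punctuation removal), which may differ from what the project uses; `normalize`, `exact_match`, and `token_prf` are hypothetical names. BLEU and ROUGE are not covered.

```python
import re
import string
from collections import Counter


def normalize(text):
    """Lowercase, strip ASCII punctuation, and collapse whitespace."""
    text = text.lower()
    text = text.translate(str.maketrans("", "", string.punctuation))
    return re.sub(r"\s+", " ", text).strip()


def exact_match(prediction, reference):
    """Return 1.0 if the normalized strings are identical, else 0.0."""
    return float(normalize(prediction) == normalize(reference))


def token_prf(prediction, reference):
    """Return (precision, recall, F1) over the bag of shared tokens."""
    pred_tokens = normalize(prediction).split()
    ref_tokens = normalize(reference).split()

    # Multiset intersection counts each shared token at most as often
    # as it appears in both the prediction and the reference.
    overlap = sum((Counter(pred_tokens) & Counter(ref_tokens)).values())
    if overlap == 0:
        return 0.0, 0.0, 0.0

    precision = overlap / len(pred_tokens)
    recall = overlap / len(ref_tokens)
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1
```

For example, scoring the prediction "Aspirin reduces fever." against the reference "aspirin reduces fever and pain" gives full precision but partial recall, since the prediction omits two reference tokens.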

- Semantic similarity analysis using embeddings and cosine similarity

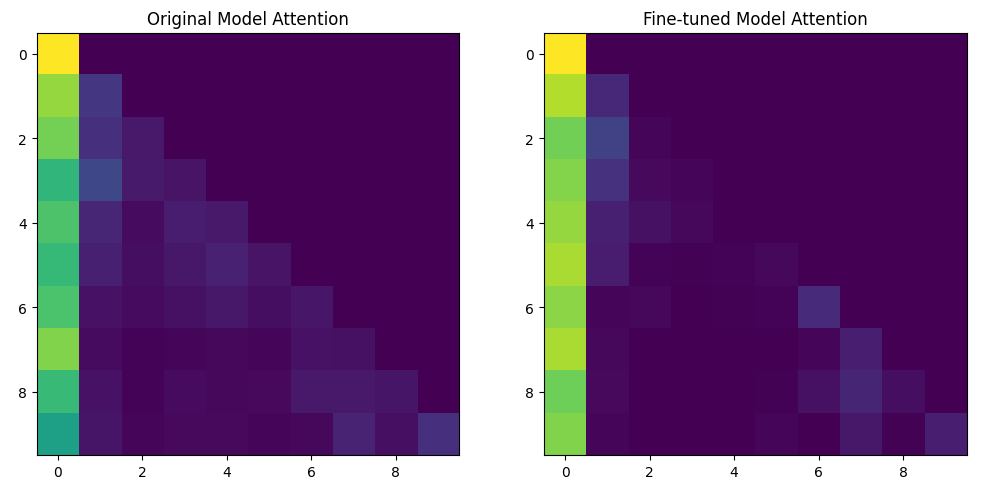

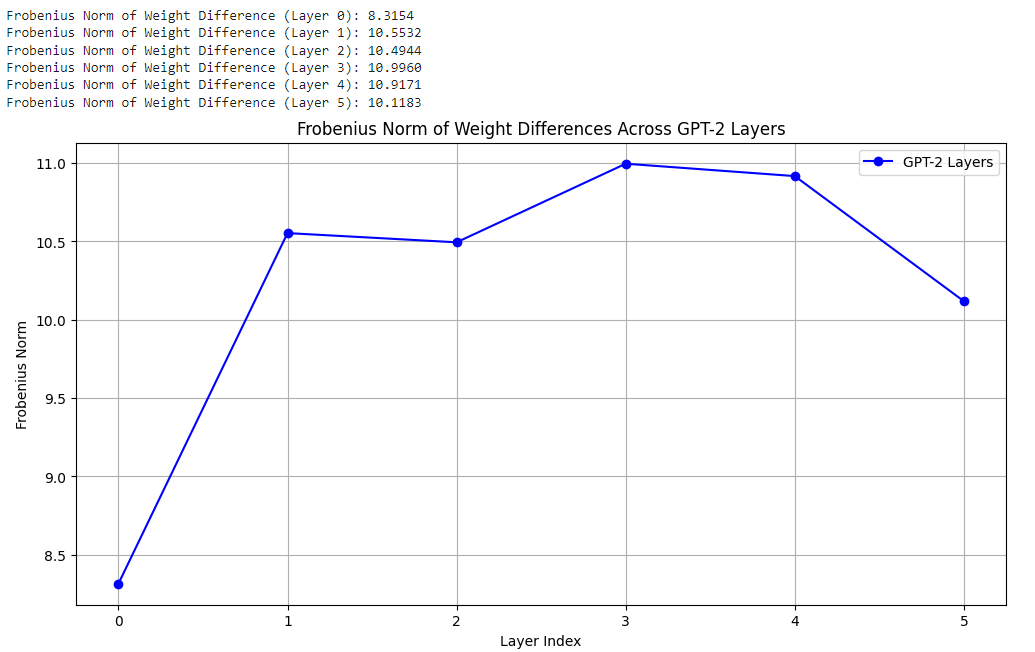

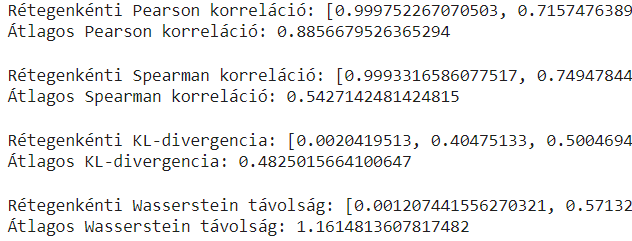
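The semantic similarity step above compares embedding vectors by cosine similarity. A minimal sketch follows, assuming each answer is represented by mean-pooled token vectors; the pooling choice and the names `mean_pool` and `cosine_similarity` are illustrative assumptions, not details taken from the project.

```python
import math


def mean_pool(token_vectors):
    """Average per-token vectors into a single sentence vector."""
    count = len(token_vectors)
    return [sum(column) / count for column in zip(*token_vectors)]


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    # A zero vector has no direction; report 0.0 instead of dividing by zero.
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)
```

Cosine similarity ignores vector length, so a generated answer and a reference answer score close to 1 when their embeddings point in the same direction, regardless of magnitude.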

- Layer-wise analysis of fine-tuned vs base model (cosine similarity, CCA, Frobenius norm)

- Visualization of attention maps and training metrics
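The layer-wise comparison listed above can be sketched for two of its three measures, the Frobenius norm and cosine similarity. This assumes each layer is available as a matrix (nested lists here) under the same name in both models; whether the project compares weights or hidden states is not stated, and `compare_layers` and its helpers are hypothetical. CCA is omitted.

```python
import math


def flatten(matrix):
    """Flatten a list-of-rows matrix into a single list of values."""
    return [value for row in matrix for value in row]


def frobenius_distance(base, tuned):
    """Frobenius norm of (tuned - base): sqrt of the sum of squared differences."""
    return math.sqrt(
        sum((t - b) ** 2 for b, t in zip(flatten(base), flatten(tuned)))
    )


def flat_cosine(base, tuned):
    """Cosine similarity between the two matrices viewed as flat vectors."""
    a, b = flatten(base), flatten(tuned)
    dot = sum(x * y for x, y in zip(a, b))
    norms = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norms if norms else 0.0


def compare_layers(base_layers, tuned_layers):
    """Map each layer name to its Frobenius distance and cosine similarity."""
    report = {}
    for name, base in base_layers.items():
        tuned = tuned_layers[name]
        report[name] = {
            "frobenius": frobenius_distance(base, tuned),
            "cosine": flat_cosine(base, tuned),
        }
    return report
```

A layer that fine-tuning left untouched shows a Frobenius distance of 0 and a cosine similarity of 1; larger distances and lower similarities point to the layers that changed most.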

Tech Stack

- Python

- PyTorch

- HuggingFace Transformers

- Datasets

- Pandas

- Weights & Biases (wandb)

- NLTK

- Scikit-learn

- Matplotlib
